However, it seems that FlywayDB does not have a tool for generating migration files that fit its naming convention. This post introduces a quick piece of work of mine: a Maven plugin that helps users generate script file names with the proper version information as a prefix.

The code has been shared on GitHub. It is named FlywayFile because I think it could be expanded to handle other file operations for FlywayDB in the future. In fact, I will ask whether FlywayDB would accept it as one of their Maven plugins.

There are two Eclipse Maven projects in the repository. The first is the main part, the Maven plugin itself. The second is an extremely simple servlet that generates a version number based on the system time and a random number.

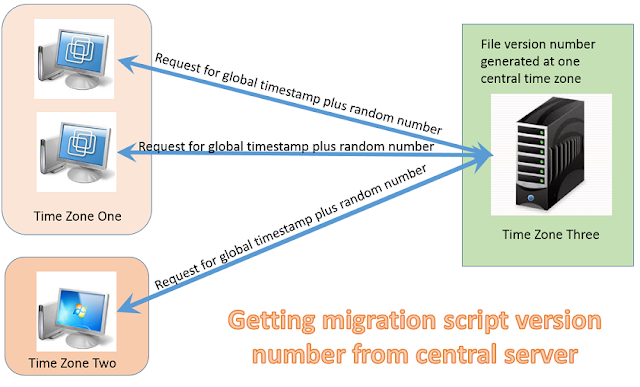

I added this servlet because there may be cases where developers work in different time zones but share one version control system such as Subversion or Git. To avoid confusion, we can use a central server to generate the migration script file names. However, this version of the code does not support complicated network environments yet; it is just a prototype. I can improve it later if working across time zones turns out to be a common case. Below is a picture that illustrates this idea quickly.
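To make the "system time plus random number" idea concrete, here is a minimal sketch of how such a version number could be built. It is not the servlet code from the repository: the class name `VersionNumberSketch`, the `yyyyMMddHHmmss` layout, and the three-digit random suffix are all my assumptions for illustration. The timestamp is formatted in UTC so that the result does not depend on the time zone of whoever asks for it.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;
import java.util.concurrent.ThreadLocalRandom;

// Hypothetical sketch; NOT the VersionNumber servlet from the repository.
final class VersionNumberSketch {

    // Assumed layout: UTC timestamp down to the second, e.g. 20130102030405.
    private static final DateTimeFormatter STAMP =
            DateTimeFormatter.ofPattern("yyyyMMddHHmmss").withZone(ZoneOffset.UTC);

    // Deterministic core: timestamp followed by a zero-padded 3-digit suffix.
    static String generate(Instant now, int suffix) {
        if (suffix < 0 || suffix > 999) {
            throw new IllegalArgumentException("suffix must be in 0..999");
        }
        return STAMP.format(now) + String.format("%03d", suffix);
    }

    // Convenience overload: current time plus a random suffix, which lowers
    // (but does not eliminate) the chance of two requests colliding.
    static String generate() {
        return generate(Instant.now(), ThreadLocalRandom.current().nextInt(1000));
    }
}
```

Because every caller gets a number derived from the same clock, two developers in different time zones would still receive versions in the order in which they asked for them.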

To use this Maven plugin, follow these steps:

- Install the plugin into your local Maven repository, as I have not published it to the Maven Central repository.

- Add the "org.idatamining" plugin group to your project pom.xml file or to your global settings.xml file.

- Add the property "flyway.SQL.directory" to your project pom.xml. This property specifies the directory where the SQL migration script file will be created.

- Add the property "flyway.filename.generator" to your project pom.xml. This property is optional. It specifies the URL where the VersionNumber servlet listens.

- Call the generate goal: mvn flywayFile:generate -DmyFilename=jia

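```xml
<project>
  <modelVersion>4.0.0</modelVersion>
  <groupId>org.idatamining</groupId>
  <artifactId>testFly</artifactId>
  <packaging>jar</packaging>
  <version>1.0-SNAPSHOT</version>
  <name>testFly</name>
  <url>http://maven.apache.org</url>
  <properties>
    <flyway.SQL.directory>/home/yiyujia/workingDir/eclipseWorkspace/testFly</flyway.SQL.directory>
    <flyway.filename.generator>http://localhost:8080/versionGenerator/VersionNumber</flyway.filename.generator>
  </properties>
  <dependencies>
    <dependency>
      <groupId>junit</groupId>
      <artifactId>junit</artifactId>
      <version>3.8.1</version>
      <scope>test</scope>
    </dependency>
  </dependencies>
</project>
```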
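For readers wondering what the generate goal boils down to, the sketch below shows one way to turn a version number and the name passed with -DmyFilename into a file in the configured SQL directory. It is only an illustration under stated assumptions, not the plugin's actual source: the class `MigrationFileSketch` and its methods are hypothetical, and the `V<version>__<description>.sql` pattern is Flyway's default naming convention for versioned migrations, which I assume the plugin targets.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;

// Hypothetical sketch; NOT the FlywayFile plugin's real implementation.
final class MigrationFileSketch {

    // Builds a name following Flyway's default convention:
    // prefix "V", the version, separator "__", the description, suffix ".sql".
    static String fileName(String version, String description) {
        // Digits, optionally grouped by dots or underscores (e.g. 1, 1.2, 2013_01).
        if (!version.matches("[0-9]+([._][0-9]+)*")) {
            throw new IllegalArgumentException("invalid version: " + version);
        }
        if (description.isEmpty() || description.contains("/") || description.contains("\\")) {
            throw new IllegalArgumentException("invalid description: " + description);
        }
        return "V" + version + "__" + description + ".sql";
    }

    // Creates an empty migration script in sqlDirectory (assumed to be the
    // value of the "flyway.SQL.directory" property) and returns its path.
    // Fails with FileAlreadyExistsException rather than overwriting a script.
    static Path create(Path sqlDirectory, String version, String description)
            throws IOException {
        Files.createDirectories(sqlDirectory);
        return Files.createFile(sqlDirectory.resolve(fileName(version, description)));
    }
}
```

With a version such as 20130102030405007 and the name jia, this would produce a file called V20130102030405007__jia.sql. Refusing to overwrite an existing file is a deliberate choice here, since silently replacing a migration script that has already been written could lose work.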